We have previously discussed some ways to optimize our Qlik applications. If you would like to review that post you may find it here.

I would like to expand on that post with some new ideas and techniques for when you run into a large and complex application.

First, let’s set the stage for what we are talking about.

What is a large application? When we implemented this technique, we had an application with more than 180 million records in it.

What is a complex application? A complex application has many tabs, charts, and expressions, including long formulas that use Set Analysis and IF statements where required. For example, our initial Dashboard tab alone has 48 expressions on it.

Summarize Data – Document Chain to Transactional Data

Applications like these typically carry transactional lines of information, because users want that level of detail for those “just in case” scenarios. As a result, the application holds many additional rows, which hurts its overall performance. Rather than holding this information, it is better to move away from the transactional view and summarize the data at a daily level. The daily view can still feed a “Details” sheet that allows for ad-hoc report building.
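To make the idea concrete, here is a minimal sketch of the summarization step, written in Python rather than Qlik script purely for illustration. The field names (timestamp, product, amount) and the sample rows are hypothetical; in a real application the same grouping would happen in the load script, on whichever dimensions your charts actually need.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical transactional rows: (timestamp, product, amount).
transactions = [
    ("2024-01-01 09:15:00", "A", 10.0),
    ("2024-01-01 14:30:00", "A", 5.0),
    ("2024-01-01 16:00:00", "B", 7.5),
    ("2024-01-02 08:45:00", "A", 2.5),
]

def summarize_daily(rows):
    """Collapse transactional rows into one row per (day, product)."""
    totals = defaultdict(lambda: {"amount": 0.0, "line_count": 0})
    for timestamp, product, amount in rows:
        # Drop the time portion so every transaction on a day shares one key.
        day = datetime.strptime(timestamp, "%Y-%m-%d %H:%M:%S").date()
        bucket = totals[(day, product)]
        bucket["amount"] += amount
        bucket["line_count"] += 1
    return dict(totals)

daily = summarize_daily(transactions)
print(len(transactions), "transaction rows ->", len(daily), "daily rows")
```

Keeping a line count next to the summed amount means averages per transaction can still be calculated from the summarized rows.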

When the user's selections narrow the data down to a manageable row count (perhaps 50,000), have a button appear on the page. That button provides a Document Chain path to another application that carries just the ad-hoc report sheet, but with all of the transactional data. This method lets users drill down into the transactional data without negatively impacting the performance of the main application.

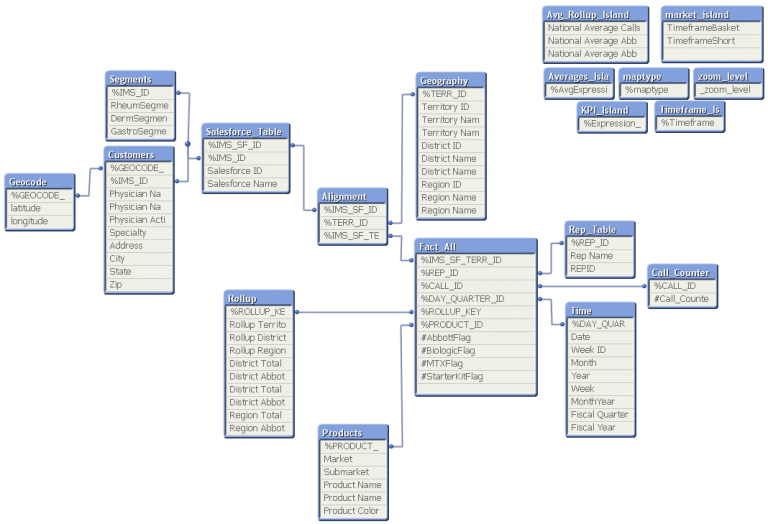
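The rule behind the button can be sketched as a simple threshold check. This is an illustration in Python, not Qlik syntax; the function name is hypothetical, and the 50,000 cutoff is only the tentative figure mentioned above. In the application itself, the equivalent logic would live in the button's conditional show expression.

```python
# Assumed cutoff; tune it to what the detail application handles comfortably.
ROW_THRESHOLD = 50_000

def show_drilldown_button(selected_row_count, threshold=ROW_THRESHOLD):
    """Return True when the current selection is small enough to hand off
    to the transactional (detail) application."""
    # An empty selection has nothing to drill into, so keep the button hidden.
    return 0 < selected_row_count <= threshold

print(show_drilldown_button(12_000))       # small selection
print(show_drilldown_button(180_000_000))  # the full data set
```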

Remove “Link” Tables for Multiple Facts

When an application has multiple fact tables, some developers will implement a “link” table. The link table holds the shared dimensions in a single table that each fact table connects to via a key. The problem with this approach is that selections now have to pass through an extra table, which increases filtering time.

Instead of creating a “link” table, concatenate the fact tables together to create one large table. Fields that exist in only one fact table will simply be null in the rows that come from the other tables. We saw a 50% performance boost when we combined four fact tables and removed the “link” table.
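Here is a minimal sketch of what the concatenated table looks like, again in Python for illustration only. The table and field names are hypothetical, and the `FactType` flag is an assumption of this sketch rather than something described above; it is a common way to tell which source each row came from.

```python
def concatenate_facts(**fact_tables):
    """Stack several fact tables (lists of dicts) into one list of rows.

    Every output row carries every field; a field that a source table
    does not have is filled with None (null).
    """
    # Collect the union of field names, preserving first-seen order.
    all_fields = []
    for rows in fact_tables.values():
        for row in rows:
            for field in row:
                if field not in all_fields:
                    all_fields.append(field)

    combined = []
    for name, rows in fact_tables.items():
        for row in rows:
            out = {field: row.get(field) for field in all_fields}
            out["FactType"] = name  # hypothetical flag naming the source table
            combined.append(out)
    return combined

# Hypothetical fact tables sharing Date and Product.
sales = [{"Date": "2024-01-01", "Product": "A", "SalesAmount": 100.0}]
budget = [{"Date": "2024-01-01", "Product": "A", "BudgetAmount": 120.0}]

facts = concatenate_facts(Sales=sales, Budget=budget)
for row in facts:
    print(row)
```

The shared dimensions (Date and Product here) now sit directly on the single fact table, so a selection no longer has to pass through an intermediate table to reach the measures.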

Review Server Resources

This is a bit of a rehash from the last optimization topic. It’s extremely important to have server resources that match the application(s) running on the server. The answer isn’t always more RAM, though. When you have a very complex application with a lot of calculations to work through, a more powerful CPU can noticeably improve performance. So when reviewing your server performance, keep an eye on your CPU just as much as your RAM.