When designing quality analytics applications that meet the expectations and requirements of various business users, being specific can mean the difference between collaborative success and argumentative failure. Rising to meet the challenge of satisfying these varied roles can be daunting. Executives, Managers, Business Analysts, Application Designers, Data Modelers and Developers may all be involved. While each of these roles has a different perspective regarding the analytics, each benefits from clarity and specificity. In a word, consumable.

When addressing analytics requests, having a clear process by which business questions can be analyzed can be immensely beneficial. Such a process provides a specific and measurable objective to the developer as well as meaningful and actionable metrics to the business consumer. The process also serves the purpose of not only ensuring the deliverable is accurate but also enables effective and extensible underlying design. The process I’m describing typically follows a path similar to the one below.

Identify the Subject Area

The subject area should represent a pain point for the business or department. Often this is related to annual corporate or departmental goals and objectives. These objectives work to identify an area that requires improvement and distributes responsibility throughout the organization. For our example, let’s pick the Sales Performance area relating to the business objective of improving Sales by a certain percentage, as familiar to most.

Brainstorm the Key Questions

Obtaining these key questions is where the experienced business people are useful. Often these valued perspectives can be useful in identifying critical dials which are strongly associated with historical performance. In our simplified case, we may consider the following as key questions. Remember – this phase is brainstorming, so not a lot of time is spent critiquing.

- How much does our company sell? What are we selling?

- How do we measure sales, in Units or Dollars? Are we considering these figures monthly or annually?

- What are our costs?

- Which stores are key performers ?

- Which regions are most profitable?

- What kind of impact does widget color or size have on sales?

Structure the Key Questions into Metrics/KPIs

Careful attention is now paid to ensuring the metrics are consistently structured. As we think through the above questions, we begin reworking them for consistency.

- How many widgets are sold monthly?

- What are total widget sales in dollars monthly?

- What are total widget costs?

- Which stores have the highest total margin in dollars?

- Which stores have the highest percentage margin?

- Which regions have the highest total margin in dollars?

- Which regions have the highest percentage margin?

- Which color of widget has the highest sales monthly?

- Which color of widget has the highest margin monthly?

Consolidate the common measures and dimensions

The outcome to this point is then deconstructed and consolidated into commonalities. You’ll begin to notice how the structuring of the metrics becomes somewhat redundant, however this redundancy can be generalized in some cases. For example, it seems that a monthly analysis is desired. It may not be required that all the metrics must include this information. Now, it is relevant to recognize that information as a relevant Dimension by which to analyze most if not all data. This same principle could be applied to the combination of measures and dimensions we’ll discover below. This is where the power of multi-dimensional analysis comes to bear. New insights will appear from a clear data structure and thorough design.

This moment also presents a critical opportunity to define how deep our analysis should go. We can fairly easily and inconsequentially presume from needing data at a month level, that aggregating it to a Year level will be a follow-on requirement, but what about the ‘Week’ or ‘Day’ level? Are they relevant? Aggregating data can be rather straightforward, however breaking down the data without prior design consideration can be catastrophic. This is the moment to understand how deep each dimension should report data.

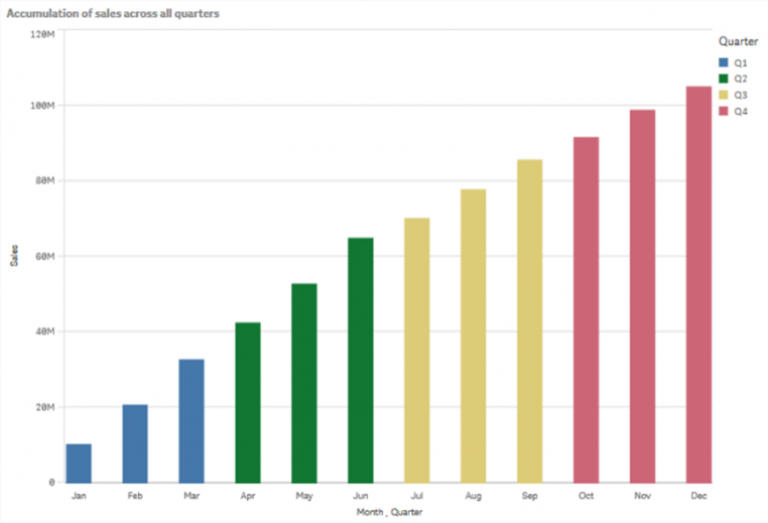

We can glean the following Measures and Dimensions from the above questions regarding Widget Sales Performance:

- Measures

- Sales ($)

- Sales (#)

- Cost ($)

- Margin ($)

- Margin (%)

- Dimensions

- Date {Year > Month}

- Store Geography {Regions > Countries > State > City > Store}

- Product Size {Large, Medium, Small}

- Product Color {Red, Orange, Yellow, Green, Blue, Purple}

Declare the Grain

This step is often overlooked. It can be the source of great headaches to a developer while simultaneously a consequence to the business users. A commitment to the level of analysis required is, perhaps, the most important technical design consideration when creating a data model and its importance cannot be understated. This granularity is defined by the lowest level detail of each dimension in combination with all the others.

Examining the lowest level of each dimension from our example above, we can see that we have the following elements of our granularity already available:

- Month

- Store

- Size

- Color

We can now declare the grain of the Sales Performance Analysis data model to be {Date:Month, Store:StoreID, Size:SizeID, Color:ColorID}, where the respective ID’s are simply data identifiers which can then reference related attributes. For example, the StoreID can then reference the store name, address and subsequently identify its City, State and Region.

Note that the Date and Store Geography dimensions are rich in terms of the number of levels available and we select the lowest level for our grain. For the size and color dimensions, which are flat, we just need to make note for them to be included.

Design implications

So why is this is so important for analytics application design?

From a modeling perspective developers now know they can aggregate transaction level data to a more summary level. If there were 20 orders of Small Blue Widgets sold on 4/12/2015 to Store 4, this can be represented by a single row in a data source rather than the 20 transactions in the source data. The size requirements of the data model are substantially reduced if the grain is not at the transaction level. The speed of the application can now be optimized as well because the processing engine need not carry additional detail if it is not required for the analysis.

From a business consumer perspective, when actionable KPI’s are laid out in a clear and concise fashion, the specificity of the deliverables make progress toward completion both measurable and attainable. The subsequent work of analyzing the data and creating business plans to improve performance is considerably more straightforward.